Import and log your dataset CSV file

We suggest you use W&B Artifacts to make the contents of the CSV file easier to re-use.- To get started, first import your CSV file. In the following code snippet, replace the

iris.csvfilename with the name of your CSV filename:

- Convert the CSV file to a W&B Table to utilize W&B Dashboards.

- Next, create a W&B Artifact and add the table to the Artifact:

- Lastly, start a new W&B Run to track and log to W&B with

wandb.init:

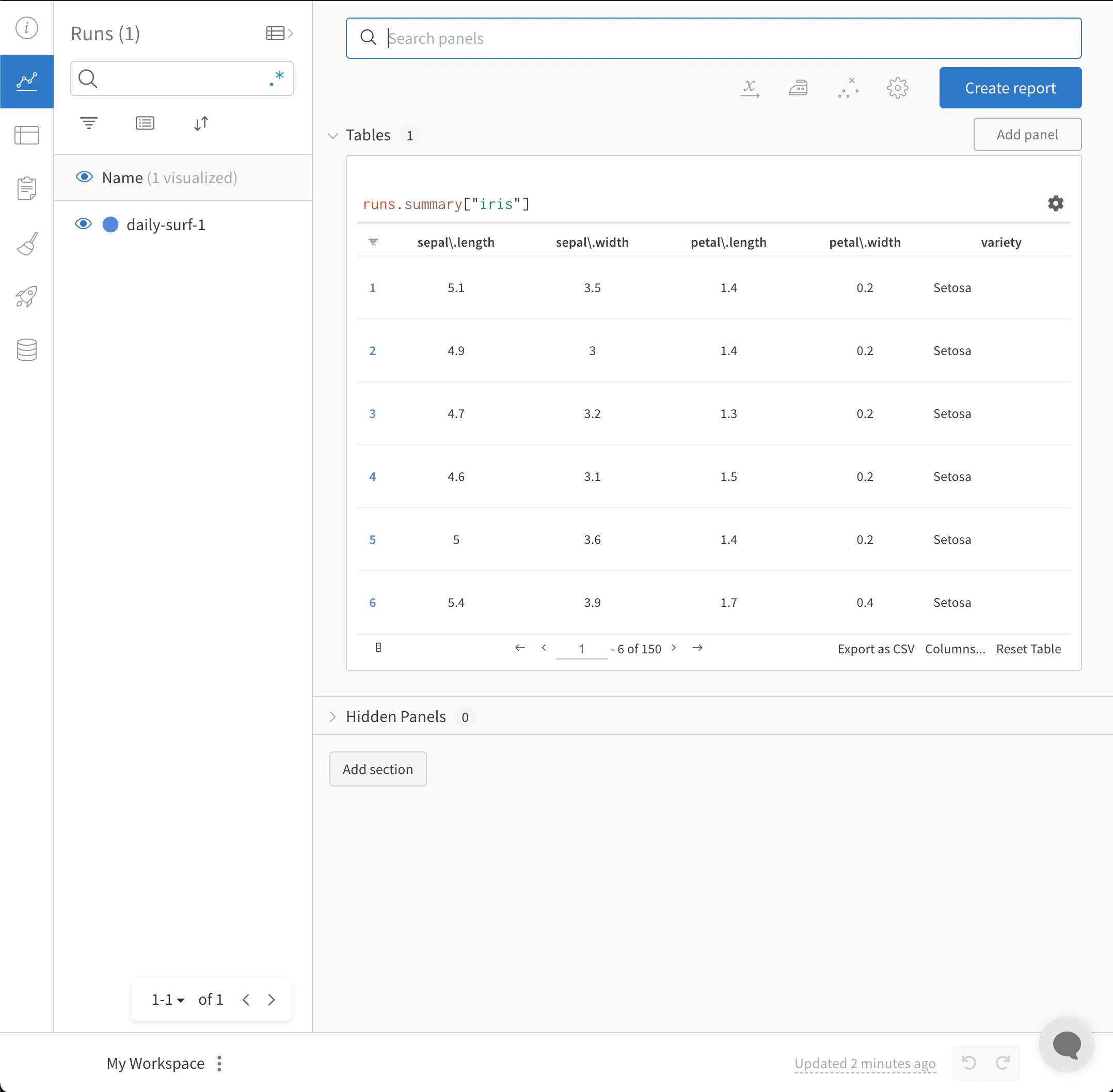

wandb.init() API spawns a new background process to log data to a Run, and it synchronizes data to wandb.ai (by default). View live visualizations on your W&B Workspace Dashboard. The following image demonstrates the output of the code snippet demonstration.

Import and log your CSV of Experiments

In some cases, you might have your experiment details in a CSV file. Common details found in such CSV files include:- A name for the experiment run

- Initial notes

- Tags to differentiate the experiments

- Configurations needed for your experiment (with the added benefit of being able to utilize our Sweeps Hyperparameter Tuning).

| Experiment | Model Name | Notes | Tags | Num Layers | Final Train Acc | Final Val Acc | Training Losses |

|---|---|---|---|---|---|---|---|

| Experiment 1 | mnist-300-layers | Overfit way too much on training data | [latest] | 300 | 0.99 | 0.90 | [0.55, 0.45, 0.44, 0.42, 0.40, 0.39] |

| Experiment 2 | mnist-250-layers | Current best model | [prod, best] | 250 | 0.95 | 0.96 | [0.55, 0.45, 0.44, 0.42, 0.40, 0.39] |

| Experiment 3 | mnist-200-layers | Did worse than the baseline model. Need to debug | [debug] | 200 | 0.76 | 0.70 | [0.55, 0.45, 0.44, 0.42, 0.40, 0.39] |

| … | … | … | … | … | … | … | |

| Experiment N | mnist-X-layers | NOTES | … | … | … | … | […, …] |

- To get started, first read in your CSV file and convert it into a Pandas DataFrame. Replace

"experiments.csv"with the name of your CSV file:

-

Next, start a new W&B Run to track and log to W&B with

wandb.init():

run.log() command to accomplish this:

define_metric API. This example adds the summary metrics to our run with run.summary.update():